Published: April 10, 2026 | Category: Technology & AI News | Reading Time: 8 min

Keywords: YouTube Shorts AI, YouTube AI Avatar, AI Deepfake Shorts, YouTube Shorts AI Feature, AI Content Creation, Digital Clone Creator, AI-Generated Video

The Breaking News That Has Everyone Talking

YouTube just dropped a bombshell. As of April 9, 2026, creators can now build photorealistic AI avatars of themselves — digital clones that look and sound exactly like them — and use those clones to star in YouTube Shorts.

This is not a concept video.

This is not a beta buried in settings.

This is live, rolling out globally (except Europe), and available to anyone 18 or older with a YouTube channel.

One line sums up why this matters: YouTube Shorts AI has made it easy to deepfake yourself.

The feature was first teased by YouTube CEO Neal Mohan back in January 2026.

Now it is real, powered by Google’s Veo text-to-video technology and integrated directly into the YouTube app and YouTube Create.

The reaction is split.

Creators see a massive productivity tool. Critics see a Pandora’s box of misinformation risks. Both sides have a point.

What Exactly Did YouTube Announce?

YouTube introduced a built-in AI avatar creation tool that lets users generate a digital version of themselves for use in Shorts.

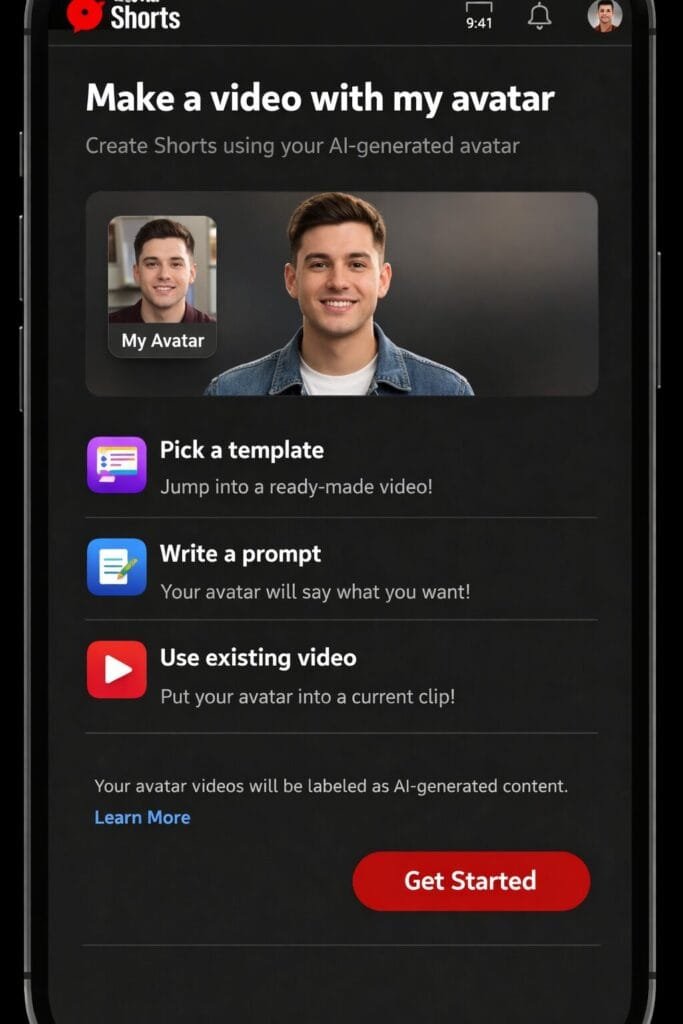

The tool sits inside the Create (+) button in the YouTube app — tap the Gemini spark icon in the corner, select “Create video,” and you will see the option “Make a video with my avatar.”

The goal, according to YouTube, is to lower the barrier to content creation.

Not everyone has a camera setup, lighting rig, or confidence to appear on screen.

The YouTube Shorts AI avatar system solves that by letting a text prompt do the work.

This is not just about convenience.

It is about fundamentally changing who can create video content and how fast they can do it.

How the AI Avatar System Works

The creation process is straightforward:

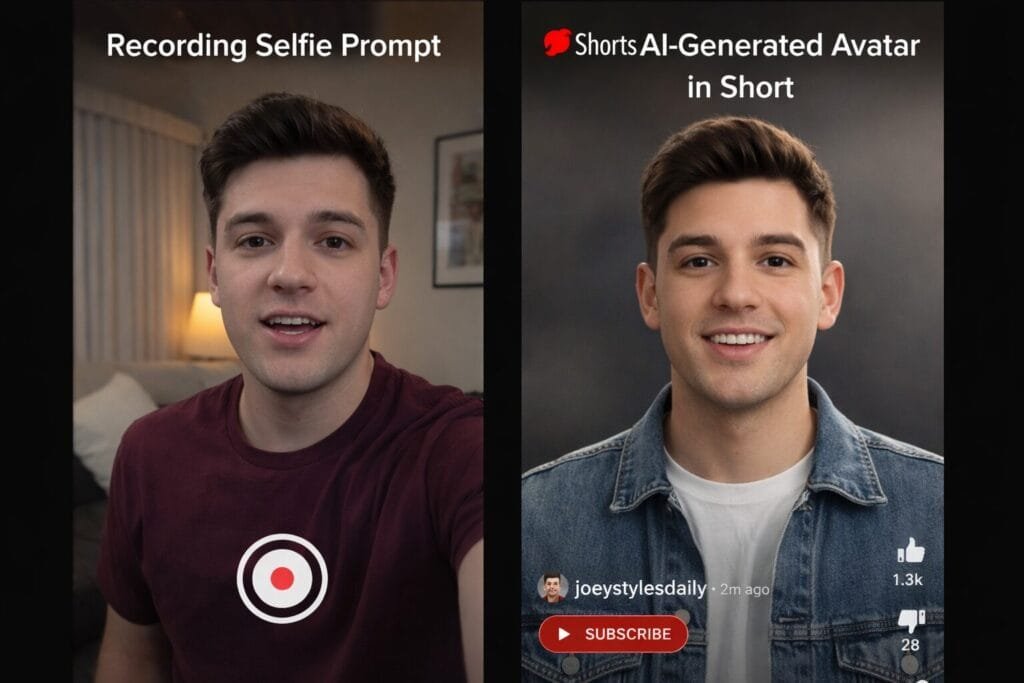

- Step 1 — Live Selfie Capture: You record a short video of your face while reading a series of on-screen prompts. This captures your facial features and voice patterns.

- Step 2 — AI Model Generation: Google’s Veo technology processes the recording and builds a photorealistic digital clone.

- Step 3 — Text-to-Video Creation: You type a prompt describing the video you want. The AI generates a Short featuring your avatar, up to 8 seconds per clip.

- Step 4 — Remix Option: You can also add your avatar to existing eligible Shorts by tapping “Remix” and then “Reimagine” with your avatar selected.

The YouTube Shorts AI system runs on Google’s generative AI infrastructure. The output is designed to be indistinguishable from real video at first glance — which is exactly what makes it both powerful and controversial.
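The 8-second cap in Step 3 has a practical consequence: anything longer has to be assembled from several generated clips. The sketch below works through that arithmetic. It assumes clips can be stitched back to back, which the announcement does not confirm, and `plan_clips` is a hypothetical helper, not part of any YouTube tool.

```python
MAX_CLIP_SECONDS = 8  # per-clip cap described in Step 3


def plan_clips(target_seconds: int, max_clip: int = MAX_CLIP_SECONDS) -> list[int]:
    """Split a target runtime into clip lengths no longer than max_clip.

    Hypothetical planning helper: it only does the arithmetic and
    does not call any YouTube or Veo service.
    """
    if target_seconds <= 0:
        raise ValueError("target_seconds must be positive")
    clips = []
    remaining = target_seconds
    while remaining > 0:
        length = min(max_clip, remaining)  # fill each clip up to the cap
        clips.append(length)
        remaining -= length
    return clips


if __name__ == "__main__":
    # A hypothetical 30-second Short needs four prompts: 8 + 8 + 8 + 6.
    print(plan_clips(30))
```

Under these assumptions, a 60-second Short would take eight separate generations, so the cap shapes workflow more than the headline number suggests.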

Current Limitations

The feature is not without boundaries. Here is what is restricted right now:

| Limitation | Details |
|---|---|
| Clip Duration | Maximum 8 seconds per generated clip |
| Age Restriction | Users must be 18 or older |
| Geographic Availability | Global rollout except Europe |
| Avatar Ownership | Only the creator who made the avatar can use it |
| Unused Avatars | May be deleted after a set period of inactivity |
| Platform Access | Available in YouTube app and YouTube Create only |
| Content Type | Shorts only; not available for long-form YouTube videos |

These are reasonable guardrails for a launch. But the real question is whether they hold up once millions of users start pushing boundaries.
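One way to read the restrictions above is as a checklist that every avatar request has to pass. The sketch below encodes them that way. Every name in it (`AvatarRequest`, `blocking_reasons`, the region and platform labels) is hypothetical and chosen for illustration; it is not YouTube's actual eligibility logic, and "europe" is a coarse label because the announcement does not list specific countries.

```python
from dataclasses import dataclass

# Values taken from the limitations listed in this article.
MIN_AGE = 18
MAX_CLIP_SECONDS = 8
EXCLUDED_REGIONS = {"europe"}  # coarse label; exact countries are not specified
ALLOWED_PLATFORMS = {"youtube_app", "youtube_create"}


@dataclass(frozen=True)
class AvatarRequest:
    """Hypothetical description of one avatar-generation request."""
    age: int
    region: str
    platform: str
    content_type: str
    clip_seconds: int
    is_avatar_owner: bool


def blocking_reasons(req: AvatarRequest) -> list[str]:
    """Return the restrictions a request would violate (empty if none)."""
    reasons = []
    if req.age < MIN_AGE:
        reasons.append("under 18")
    if req.region.lower() in EXCLUDED_REGIONS:
        reasons.append("region excluded")
    if req.platform not in ALLOWED_PLATFORMS:
        reasons.append("unsupported platform")
    if req.content_type != "short":
        reasons.append("Shorts only")
    if req.clip_seconds > MAX_CLIP_SECONDS:
        reasons.append("clip too long")
    if not req.is_avatar_owner:
        reasons.append("not the avatar owner")
    return reasons


if __name__ == "__main__":
    ok = AvatarRequest(25, "india", "youtube_app", "short", 8, True)
    print(blocking_reasons(ok))  # no restrictions violated
```

Returning every violated rule, rather than stopping at the first, makes it easier to see which guardrail is doing the work for a given request.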

Safety, Ethics, and Platform Safeguards

YouTube has layered several safety measures into the system:

- Ownership Lock: Your avatar is tied to your account. No one else can use your digital clone to generate content.

- AI Labeling: All avatar-generated videos carry visible disclosures that the content is AI-generated.

- SynthID Watermarks: Google’s SynthID technology embeds invisible watermarks into the generated video for detection and traceability.

- C2PA Metadata: Content credentials following the C2PA standard are attached, providing a chain of provenance.

- Biometric Data Handling: The selfie and voice recording are used only for avatar creation, according to YouTube.

On paper, this is one of the more responsible AI rollouts from a major platform. But “on paper” and “in practice” are very different things.
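The gap between paper and practice is easier to see if the three disclosure mechanisms are treated as independent layers, any of which could be missing from a given copy of a clip. The sketch below models that idea only. `DisclosureLayers` and `missing_layers` are hypothetical names, and nothing here reads real SynthID watermarks or C2PA manifests; how each layer survives re-uploads or edits is not something the announcement specifies.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DisclosureLayers:
    """Hypothetical record of which disclosure layers a clip still carries."""
    visible_label: bool
    synthid_watermark: bool
    c2pa_credentials: bool


def missing_layers(layers: DisclosureLayers) -> list[str]:
    """List the disclosure layers that are absent from a clip."""
    checks = {
        "visible AI label": layers.visible_label,
        "SynthID watermark": layers.synthid_watermark,
        "C2PA content credentials": layers.c2pa_credentials,
    }
    return [name for name, present in checks.items() if not present]


if __name__ == "__main__":
    # Illustrative scenario: a copy that kept its watermark but lost the rest.
    copy = DisclosureLayers(visible_label=False,
                            synthid_watermark=True,
                            c2pa_credentials=False)
    print(missing_layers(copy))
```

The point of layering is redundancy: a clip is only fully anonymous as AI-generated when every layer in the list is gone.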

The Big Question: Is It Really Safe?

Here is where the optimism runs into hard data.

According to the 2026 International AI Safety Report, deepfake risks are escalating fast. Some numbers that should concern everyone:

| Deepfake Risk Metric | Data Point |
|---|---|
| Deepfake videos that are pornographic | 96% |
| Popular “nudify” apps targeting women | 19 out of 20 |
| People who misidentify AI text as human-written | 77% |
| Listeners who mistake AI voices for real speakers | 80% |

YouTube’s own deepfake detection tool — the “likeness detection” feature — was expanded in March 2026 to cover politicians, government officials, and journalists.

That expansion happened because AI-generated impersonations were becoming a real threat to elections and public trust.

Security experts have also flagged a deeper concern: by submitting your biometric data (face and voice) to Google for avatar creation, you may be unknowingly enabling future AI model training on that data.

As one cybersecurity expert put it, “creators need to think carefully about whether they want their face controlled by a platform rather than owned by themselves.”

Industry Impact: The Rise of AI-Assisted Content

YouTube’s move is not happening in isolation. The entire content creation industry is shifting toward AI-assisted workflows.

The YouTube Shorts AI avatar tool is just one piece of a much larger shift. Across the industry, AI-powered platforms are replacing technical skill barriers with simple text prompts. Motion design tools generate animations in seconds. Voice cloning services replicate speech patterns from short samples. Text-to-video models produce clips that once required entire production teams.

YouTube’s AI avatar feature follows the same logic. You do not need a camera crew, a script, or editing software. You need a prompt and 30 seconds. The YouTube Shorts AI system handles the rest.

This is the new reality of the creator economy: AI is not just assisting creators — it is becoming the creator.

Competitive Landscape: YouTube vs TikTok vs Instagram

YouTube is not the only platform investing in AI creator tools. Here is how the major players compare:

| Feature | YouTube | TikTok | Instagram |
|---|---|---|---|
| AI Avatar/Clone | Yes (Veo-powered, April 2026) | AI effects and filters, no full avatar clone yet | No native avatar clone |
| AI Video Generation | Text-to-video via Veo | Limited AI editing tools | AI-powered Reels suggestions |
| AI Motion & Animation | Integrated avatar + Veo pipeline | Built-in AI effects | Basic AI filters |
| AI Content Labels | SynthID + C2PA + visible disclosure | AI-generated content labels | AI-generated content labels |
| Creator Access | 18+, global (except Europe) | Varies by feature | Varies by feature |

YouTube is clearly making the most aggressive push. By integrating full avatar generation directly into its platform — not as a third-party plugin but as a native feature — YouTube is positioning itself as the default platform for AI-powered content creation.

Impact on the Creator Economy

The implications for creators are massive:

Scaling content production. A single creator using YouTube Shorts AI can now produce dozens of Shorts per day without being on camera. Combined with AI animation tools for intros and motion graphics, the entire production pipeline can be AI-driven.

New monetization angles. Creators who struggle with camera presence can now participate in the Shorts economy. Faceless channels can suddenly have a “face” — an AI-generated one.

The authenticity problem. Here is the trade-off nobody is talking about enough. Audiences follow creators because of a perceived human connection. When that “human” is an AI clone reading a script it never wrote, what happens to trust? What happens to the parasocial relationship that drives engagement?

This is not a hypothetical question. It is the central tension of AI-generated content, and YouTube just made it mainstream.

Audience Trust and Engagement Challenges

The transparency debate comes down to a simple question: will viewers care that the person on screen is not real?

Early data suggests most people cannot tell the difference. The 2026 International AI Safety Report found that 80% of listeners mistake AI voices for real speakers. If that holds for video, YouTube’s AI disclosure labels may be the only thing standing between a viewer and a deception they never notice.

Some creators argue that labels are enough — as long as the content is clearly marked, the audience can decide. Critics counter that labels get ignored, especially on fast-scrolling short-form platforms where the average watch time is under 10 seconds.

The long-term risk is platform credibility. If AI avatar Shorts flood the platform with content that is hard to distinguish from real footage, user trust in the entire ecosystem erodes. That is a problem YouTube cannot label its way out of.

Regulation and Policy Outlook

Governments are watching. The EU’s AI Act already imposes transparency requirements on AI-generated content — which may explain why YouTube excluded Europe from the initial rollout.

In the US, several states have introduced deepfake legislation, but there is no federal standard yet. The gap between what AI can do and what the law addresses is growing faster than regulators can respond.

YouTube’s self-regulation through SynthID and C2PA is a start, but platform-level safeguards are not a substitute for legal frameworks. If a creator’s AI avatar is stolen, manipulated, or used in a way that causes harm, the legal recourse is unclear.

Future Outlook: Fully AI-Generated Social Media?

The trajectory is clear. If YouTube is offering 8-second AI avatar clips today, full-length AI-generated videos are coming. If creators can clone themselves today, entire AI-run channels are coming tomorrow.

AI tools are already automating the visual layer — motion graphics, animated text, branded content — in seconds. YouTube Shorts AI automates the human layer with photorealistic avatar clones. Stack these together and you have a content production pipeline that requires zero on-camera time, zero editing skill, and minimal creative input.

The opportunities are real: accessibility, scale, cost reduction. The risks are equally real: misinformation, identity theft, erosion of trust, and a content ecosystem where nothing you see is guaranteed to be real.

Conclusion: Innovation vs. Responsibility

The YouTube Shorts AI avatar feature is the biggest shift in content creation since the platform introduced Shorts. It lowers barriers, expands access, and gives creators tools that were science fiction five years ago.

But the feature also normalizes deepfake technology at a scale no platform has attempted before. The safeguards are promising but unproven. The regulatory landscape is unprepared. And the long-term effects on audience trust are unknown.

The bottom line: AI is no longer assisting creators — it is becoming them. Whether that is a revolution or a reckoning depends entirely on what happens next.

FAQ: YouTube Shorts AI Deepfake Feature

1. What is YouTube’s new AI deepfake feature in Shorts?

YouTube’s new feature allows creators to generate AI-powered avatars of themselves that can appear in Shorts videos without needing to record new footage. It uses facial and voice data to create realistic digital versions of creators.

2. How does YouTube’s AI avatar system work?

Creators record a short video and voice sample, which the AI uses to build a digital clone. This avatar can then be used to generate new video clips automatically.

3. Can anyone use YouTube’s deepfake feature?

Almost. The feature is rolling out globally, except in Europe, to anyone 18 or older with a YouTube channel. Each avatar can only be used by the creator who made it.

4. Is YouTube’s AI deepfake tool safe?

YouTube has added safeguards like ownership restrictions, watermarking, and AI disclosure labels. However, concerns about misuse and impersonation still remain.

Disclaimer

This article is for informational and educational purposes only. The views expressed are based on publicly available information as of April 10, 2026. This article does not constitute professional, legal, or financial advice. The mention of any tools, platforms, or brands — including YouTube, Google, TikTok, or Instagram — is for informational context only and does not imply endorsement or affiliation. AI-generated deepfake technology carries significant ethical and legal risks. Readers are encouraged to evaluate these technologies critically and stay informed about relevant regulations in their jurisdiction. All statistics cited are sourced from publicly available reports and may be subject to updates or corrections.

Sources:

- YouTube Shorts will use AI to make avatars that look and sound like you — 9to5Google

- Google introduces AI-generated avatars to YouTube Shorts — Engadget

- YouTube will soon let creators make Shorts with their own AI likeness — TechCrunch

- YouTube Lets Users Make Photorealistic AI Avatars For Shorts — Dataconomy

- YouTube’s new AI deepfake tracking tool is alarming experts — CNBC

- 2026 International AI Safety Report — Creati.ai

- YouTube expands deepfake detection tool to politicians — Axios

- YouTube Shorts debuts new AI video creation features — NotebookCheck