Inside the global debate reshaping courtrooms from Stuttgart to Shenzhen — where algorithms meet due process, and the soul of justice hangs in the balance.

By Global Justice Desk ● March 30, 2026 ● ~1,900 words ● AI in Judiciary

— Introduction

AI in Courts | Courts Embrace AI – But Draw the Line

In the marble halls of justice, something unprecedented is happening.

Judges who once spent hours sorting through paper files are now working alongside algorithms.

Clerks who manually transcribed witness testimony are being replaced by AI-powered speech-to-text systems.

And in courts from Stuttgart to Shenzhen, the race to reduce case backlogs through artificial intelligence is accelerating with breathtaking speed.

Yet despite the technological surge, courts worldwide are drawing a firm, deliberate line.

AI in the judiciary may assist, organise, and accelerate — but it shall not decide.

The global consensus, echoed from UNESCO’s corridors to the EU’s legislative chambers, is unambiguous: justice must remain irreversibly human.

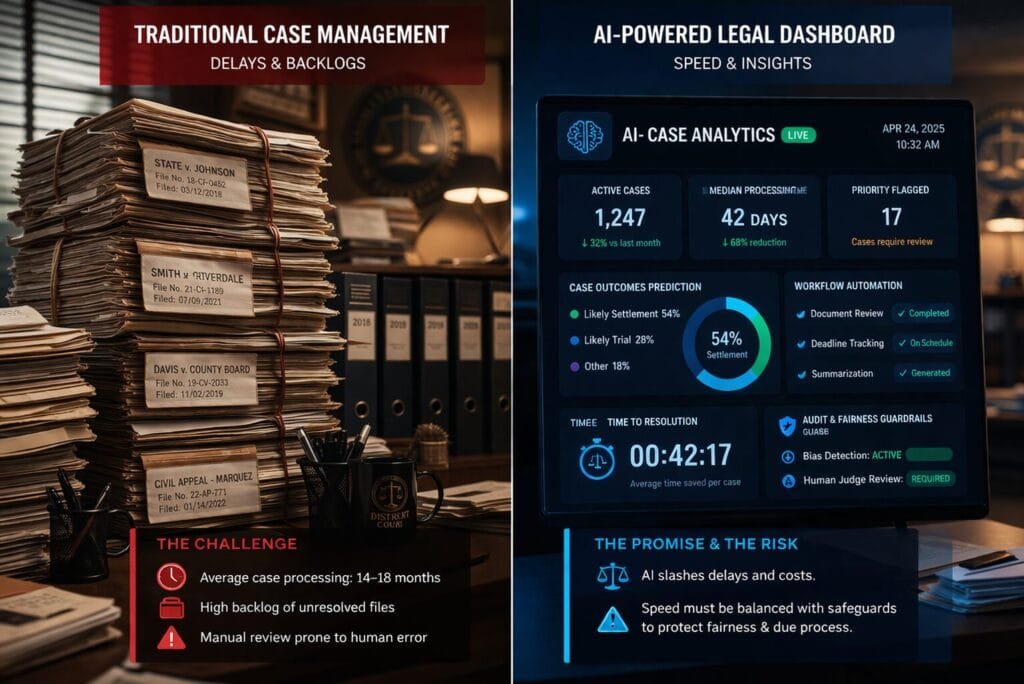

The numbers tell the story of urgency.

In one South Asian nation, over 53 million cases are currently pending, some unresolved for more than three decades.

At Germany’s Stuttgart Higher Regional Court alone, a backlog of more than 10,000 cases overwhelmed judges, who spent hours manually reviewing electronic pleadings that ran to hundreds of pages. The pressure for faster justice is real, existential, and growing.

| Figure | What it measures |
| --- | --- |
| 53M+ | Cases pending in one South Asian country |
| 44% | Judicial operators using AI tools globally (UNESCO) |
| Nearly 300% | Productivity increase via AI assistant Prometea (Argentina) |
| 2T | Chinese legal characters in Shenzhen’s AI training corpus |

— Boundaries

Where Courts Draw the Line: AI vs. Human Judgment

The fundamental question defining the future of AI in the judiciary is deceptively simple: where should algorithmic assistance stop and human judgment begin? Courts worldwide are answering with increasing clarity — AI can process, sort, and suggest, but it cannot feel, empathise, or bear moral responsibility.

Chief Justice John Roberts of the U.S. Supreme Court articulated this tension memorably, noting a persistent public perception of a “human-AI fairness gap” — a widespread belief that human adjudications, for all their flaws, are inherently fairer than whatever the machine generates. This gap is not merely sentimental; it reflects something deep about what justice requires.

“At least at present, studies show a persistent public perception of a ‘human-AI fairness gap,’ reflecting the view that human adjudications, for all of their flaws, are fairer than whatever the machine spits out.” – Chief Justice John Roberts, U.S. Supreme Court

Human judges bring more than information to the bench. They bring discretion, contextual awareness, the ability to read a defendant’s remorse, and the moral courage to rule against popular sentiment. An algorithm, trained on historical patterns, can only reflect what came before — and history is not always just.

— Speed vs. Justice

Speed vs. Justice: Can Faster Courts Stay Fair?

The efficiency gains from AI in the judiciary are hard to dispute.

In Argentina, the virtual AI assistant Prometea has helped legal professionals process nearly 490 cases per month, compared to just 130 before its introduction — a nearly 300% surge in productivity.
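As a quick check on the Prometea figures above, the short Python sketch below works through the arithmetic. It uses only the two monthly throughput numbers quoted in the text (about 130 cases before, nearly 490 after); the function name `throughput_change` is purely illustrative and is not drawn from any court system.

```python
def throughput_change(before: float, after: float) -> tuple[float, float]:
    """Return (multiplier, percent_increase) for a change in monthly throughput."""
    if before <= 0:
        raise ValueError("baseline throughput must be positive")
    multiplier = after / before
    percent_increase = (multiplier - 1) * 100
    return multiplier, percent_increase


# Figures quoted in the article: roughly 130 cases/month before Prometea, nearly 490 after.
multiplier, percent_increase = throughput_change(130, 490)
print(f"{multiplier:.2f}x the earlier throughput")
print(f"{percent_increase:.0f}% increase")
```

On these rounded figures the result is about 3.8 times the earlier throughput, an increase of roughly 277%, which is in line with the article's "nearly 300%" and "nearly four times as many cases".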

In Brazil, the VICTOR AI system evaluates Supreme Court appeals in seconds; the same task takes a court clerk 44 minutes.

In Egypt, automated transcription introduced in 2024 has reduced hearing delays dramatically.

Germany’s experience is instructive.

At Stuttgart’s Higher Regional Court, AI with natural language understanding was deployed to categorise cases from pleadings running to hundreds of pages.

The system significantly reduced processing time, freeing judges to focus on substance over administration — a genuine victory for justice delivery.

Yet the risk of speed worship is real.

Due process is not merely a formality; it is the architecture of fairness.

When courts rush to clear dockets, defendants may receive less time, less scrutiny, and less empathy.

The question is not whether AI can accelerate — it demonstrably can — but whether courts can accelerate without sacrificing the deliberateness that justice demands.

— Hidden Risks

The Hidden Risk: Bias, Errors, and Black-Box AI

Perhaps no challenge facing AI in the judiciary is more troubling than algorithmic bias.

AI systems, trained on historical court data, can encode the prejudices of the past into the decisions of the future.

The infamous COMPAS tool — used in several U.S. jurisdictions to assess reoffending likelihood — became a touchstone for this concern, with critics arguing its outputs carried racially skewed predictions that influenced sentencing.

In Spain, the VioGén risk-assessment tool for domestic violence cases illustrates the “automation bias” problem starkly: reports indicate that in 95% of cases, officers follow VioGén’s risk classification without modification, despite having the legal discretion to override it.

An algorithm designed to support decision-making has, in practice, become the decision-maker.

Then there is the “black box” problem.

When India’s SUPACE system highlights certain legal precedents for a judge, neither the judge nor the litigant knows precisely how those cases were prioritised.

Scholars warn that this opacity makes errors difficult to detect and may subtly shape judicial thinking if algorithmic suggestions are perceived as neutral truth.

They are not neutral — they are statistical. And statistics, divorced from context, can be deeply unjust.

KEY RISKS OF AI IN THE JUDICIARY

| Risk | Description | Real-World Example | Severity |
| --- | --- | --- | --- |
| Algorithmic Bias | AI trained on biased historical data may reproduce systemic inequalities | COMPAS tool, USA | HIGH |
| Black-Box Opacity | Judges cannot fully explain or scrutinise AI-driven recommendations | SUPACE, India; VioGén, Spain | HIGH |
| Automation Bias | Humans uncritically defer to AI outputs, reducing independent judgment | VioGén (95% adherence), Spain | MED-HIGH |
| AI Hallucinations | Generative AI produces false citations or non-existent case references | Delhi High Court ChatGPT incident, 2025 | MEDIUM |
| Deepfake Evidence | AI-generated media submitted as authentic evidence | Mendones v. Cushman & Wakefield, CA 2025 | HIGH |
| Eroded Trust | Public may reject AI-influenced verdicts as illegitimate | Human-AI fairness gap research | MEDIUM |

— Transparency

Can AI Be Audited? Transparency and Legal Challenges

The push for explainable AI in the judiciary is gaining legislative momentum.

UNESCO launched its Guidelines for the Use of AI Systems in Courts and Tribunals in December 2025, offering 15 principles centred on auditability, human oversight, and information security.

These guidelines, developed with input from over 100 contributors across 41 countries, represent the most comprehensive international framework for judicial AI to date.

The European Union has gone further legislatively.

Its Artificial Intelligence Act explicitly classifies AI systems used in law enforcement and judicial contexts as “high risk,” requiring rigorous testing, transparency disclosures, and continuous human oversight.

Violations can incur penalties reaching into tens of millions of euros.

A critical legal question remains unresolved: should defendants have the right to challenge AI-driven inputs that influenced their case? Legal scholars argue strongly for this right, noting that the inability to interrogate an algorithm’s reasoning fundamentally undermines the right to a fair hearing — a cornerstone of justice in virtually every legal tradition.

— Global Case Studies

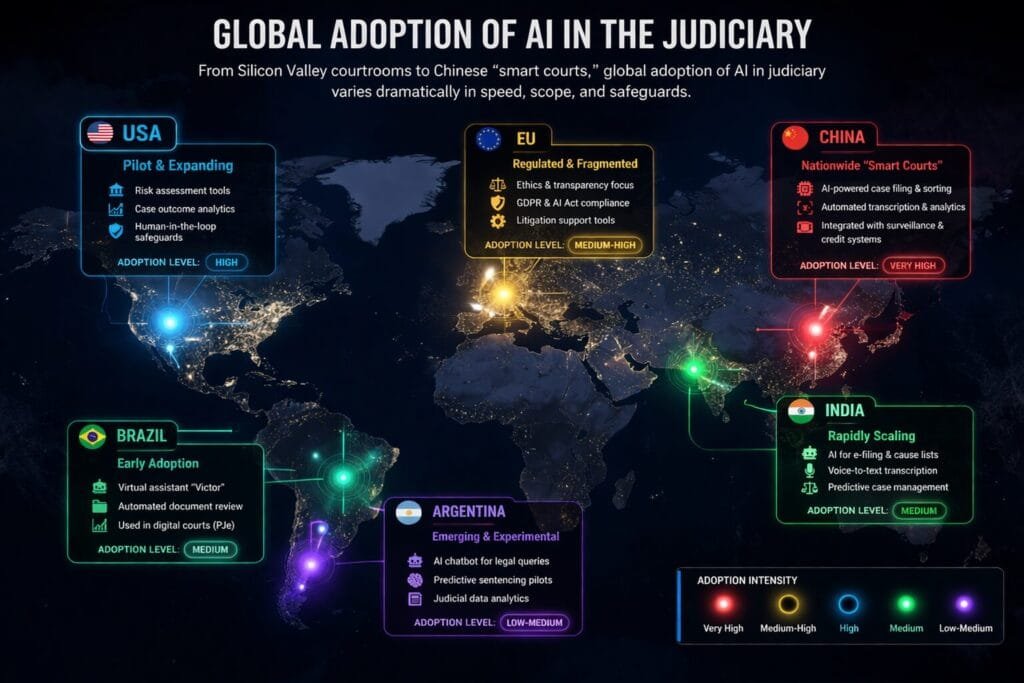

Global Case Studies: How Countries Are Using AI in Courts

🇺🇸 United States — Risk Assessment and Deepfake Reckoning

The U.S. uses AI primarily for legal research, case scheduling, and risk-assessment tools like COMPAS. However, 2025 brought new challenges: the Mendones v. Cushman & Wakefield case became one of the first known instances of a deepfake video submitted as authentic courtroom evidence. An Arizona court also allowed an AI-generated avatar of a murder victim to deliver a sentencing impact statement. The U.S. Judicial Conference is actively considering amendments to the Federal Rules of Evidence.

🇨🇳 China — Smart Courts and Integrated AI Reasoning

China has moved furthest toward integrated AI-assisted judicial reasoning. Shenzhen’s Intelligent Adjudication System, trained on two trillion Chinese legal characters, assists judges across civil and commercial cases — summarising case facts, generating hearing prompts, and drafting preliminary judgments that judges then review and refine. Internet courts process millions of minor disputes largely online, with AI handling triage, scheduling, and preliminary analysis.

🇪🇺 European Union — Regulatory Leadership

Europe leads on regulation. The EU AI Act’s high-risk classification for judicial AI demands transparency, testing, and ongoing human oversight. Germany is piloting AI for document categorisation and judgment drafting under strict ethical guardrails. Croatia launched ANON in January 2025 — an AI tool for automatic anonymisation and publication of court decisions.

🇮🇳 India — Cautious Progress Amid Huge Backlogs

India’s Supreme Court uses SUPACE for legal research support. Kerala mandated the AI speech-to-text tool Adalat.AI for all subordinate courts from November 2025. Yet controversy struck when the Delhi High Court encountered pleadings that cited cases hallucinated by ChatGPT, cases that do not exist. India’s approach now emphasises that AI is an administrative assistant, with a strict prohibition on generative AI drafting judgments.

GLOBAL AI JUDICIARY ADOPTION SNAPSHOT — 2026

| Country/Region | Primary AI Use | Key Tool/System | Regulatory Stance | Stage |
| --- | --- | --- | --- | --- |
| 🇺🇸 United States | Risk assessment, legal research, scheduling | COMPAS, various LLMs | Fragmented by state | Active / Contested |
| 🇨🇳 China | Judicial reasoning, case drafting, internet courts | Shenzhen Intelligent Adjudication System | State-led expansion | Advanced |
| 🇪🇺 EU / Germany | Document categorisation, judgment drafting | Frauke (IBM), ANON (Croatia) | Strict (EU AI Act) | Cautious / Regulated |
| 🇮🇳 India | Transcription, legal research, e-filing | SUPACE, Adalat.AI | Developing guidelines | Early / Evolving |
| 🇧🇷 Brazil | Appeals screening, AI judicial assistants | VICTOR, Chat-JT | Federal oversight | Active / Expanding |
| 🇦🇷 Argentina | Case processing, constitutional rights analysis | Prometea | Nationally coordinated | Productive |

— Changing Roles

The Changing Role of Judges and Lawyers

The legal profession is not being replaced — it is being transformed. For judges, AI functions as a research librarian, a document sorter, and a scheduling assistant.

The judge remains the sole decision-maker, the moral authority, and the constitutional cornerstone.

What changes is the quality of preparation: judges with AI assistance can access relevant precedents faster, review evidence more thoroughly, and dedicate cognitive energy to the complexity that matters.

For lawyers, the shift is equally profound. AI tools now draft initial briefs, conduct exhaustive case-law searches in seconds, predict likely judicial interpretations, and identify procedural vulnerabilities.

Yet the attorney remains essential — for strategy, for advocacy, for the human connection to a client’s story that no algorithm can replicate.

New skills are becoming mandatory in AI-powered legal systems: understanding the limitations of AI outputs, critically evaluating algorithmic suggestions, and maintaining the professional discipline to override a machine when justice demands it.

The legal curriculum is already changing; law schools worldwide are integrating legal technology literacy as a core competency.

— Regulation

Regulation and Accountability: Who Takes the Blame?

The accountability gap is perhaps the most pressing unresolved question for AI in the judiciary this decade.

When an AI-assisted sentence proves unjust — when a risk score was algorithmically inflated, when a precedent search missed a crucial case — who bears responsibility? The software vendor? The court administrator? The judge who accepted the recommendation?

Current legal frameworks are largely silent on this.

The EU AI Act begins to address liability for high-risk AI systems, but enforcement mechanisms remain nascent.

UNESCO’s December 2025 guidelines urge states to establish clear chains of accountability, audit mechanisms, and redress procedures for AI-related judicial errors.

REGULATORY FRAMEWORKS GOVERNING AI IN JUDICIARY (2025–2026)

| Framework | Issuing Body | Key Requirements | Enforcement |
| --- | --- | --- | --- |
| EU AI Act | European Union | High-risk classification; mandatory transparency; human oversight | Legally Binding |
| UNESCO AI Guidelines for Courts | UNESCO (Dec 2025) | 15 principles; auditability; human decision primacy | Voluntary / Benchmark |
| OECD AI Ethics Guidelines | OECD | Proportionality, fairness, human rights in AI judicial use | Advisory |
| Singapore Model AI Governance | Govt of Singapore | Accountability, data protection, responsible innovation | National Policy |
| UK Judicial AI Guidance | Courts & Tribunals Judiciary (UK) | Caution advised; AI not recommended for legal reasoning tasks | Guidance Only |

— The Future

The Future of AI in Judiciary: Assistant or Co-Judge?

Over the next decade, researchers project three distinct scenarios for AI in the judiciary. In the first — the “Administrative AI” scenario — AI remains confined to clerical tasks: transcription, scheduling, document analysis, and research support.

Human judges retain full decision-making authority; algorithms are tools, not authorities. This is the current dominant model, and arguably the safest.

In the second — the “Recommendation Engine” scenario — AI begins providing structured sentencing suggestions, bail recommendations, and case outcome predictions, which judges must explicitly accept, modify, or reject with written justification. This requires robust explainability, audit trails, and defendant rights of challenge.
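One way to picture the "accept, modify, or reject with written justification" requirement is as a record that cannot be created without a reason attached. The Python sketch below is a hypothetical illustration only: the names `JudgeAction`, `ReviewRecord`, and `record_review`, along with the sample case, are assumptions made for this example and do not describe any court's actual system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class JudgeAction(Enum):
    ACCEPT = "accept"
    MODIFY = "modify"
    REJECT = "reject"


@dataclass(frozen=True)
class ReviewRecord:
    """One audit-trail entry: what the AI suggested and what the judge did with it."""
    case_id: str
    ai_suggestion: str
    action: JudgeAction
    justification: str
    final_decision: str
    recorded_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))


def record_review(case_id, ai_suggestion, action, justification, final_decision=None):
    """Build an audit entry, refusing any review that lacks a written justification."""
    if not justification.strip():
        raise ValueError("a written justification is required for every action")
    if action is JudgeAction.ACCEPT:
        # Accepting means the AI suggestion becomes the decision, still with reasons.
        final_decision = ai_suggestion
    elif not final_decision:
        raise ValueError("modify/reject requires the judge's own decision")
    return ReviewRecord(case_id, ai_suggestion, action, justification, final_decision)


# Hypothetical usage: the judge modifies an AI bail suggestion and says why.
entry = record_review(
    "hypothetical-001",
    "bail granted with daily reporting",
    JudgeAction.MODIFY,
    "Reporting frequency reduced given the defendant's work schedule.",
    final_decision="bail granted with weekly reporting",
)
```

The design choice here is that the justification check sits in the only function that creates records, so an unexplained acceptance of a machine suggestion never reaches the audit trail; a real system would also need to address explainability and the defendant's right of challenge, which this sketch does not attempt.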

In the third and most controversial — the “Co-Judge” scenario — AI systems participate meaningfully in routine, low-stakes decisions such as minor traffic violations, small claims, and administrative disputes.

Human judges retain oversight and appellate review. China’s smart courts most closely approach this model today.

Whether public trust will keep pace with technological capability is the defining variable. Research consistently shows that people trust human adjudications more than algorithmic ones — even when AI demonstrates greater statistical accuracy.

In a democracy, legitimate justice requires not only correct outcomes but perceived fairness. And that perception, for now, remains firmly human.

— Conclusion

Justice Must Stay Human

The integration of AI in the judiciary is not a question of if but of how.

The technology exists, the backlog pressures are real, and the efficiency gains are documented. Argentina processes nearly four times as many cases with AI assistance.

Brazil screens appeals in seconds instead of minutes.

Germany categorises thousands of cases without exhausting its judges. These are genuine victories for access to justice.

But justice is not merely an efficiency problem.

It is a moral undertaking, rooted in human dignity, contextual wisdom, and institutional trust built over centuries.

An algorithm cannot look a defendant in the eye and measure remorse. It cannot sense that a written statement obscures a deeper truth.

It cannot make the courageous decision that logic suggests is wrong but conscience insists is right.

“The rule of law must guide technology, not the other way around.” – Justice Speakers Institute, Global AI in Courts Report, 2025

The global legal community’s emerging consensus is clear: AI is a powerful, valuable, and necessary assistant in the modern courtroom.

It can sharpen the minds of judges, lighten the burdens of clerks, and widen access to justice for millions who currently wait years for their day in court.

But it cannot replace the irreplaceable — the human capacity to judge with wisdom, fairness, and accountability.

Speed matters. Efficiency matters. But in the end, fairness matters more.

The question for every court system in every nation is the same: can you harness the power of AI while preserving the soul of justice? That answer will define the legal landscape of the 21st century.

FAQ Section

Q1. Can AI replace judges in the future?

AI is highly unlikely to fully replace judges. While it can assist with legal research, document analysis, and case predictions, judicial decisions require human reasoning, ethical judgment, and contextual understanding. Courts worldwide emphasise that AI should act as a support tool, not a decision-maker.

Q2. Is AI already being used in courts today?

Yes, AI is already used in several legal systems. Courts use it for tasks like case management, legal research, document review, and even risk assessments in some jurisdictions. However, final rulings are still made by human judges.

Q3. What are the risks of AI in legal systems?

The biggest risks include algorithmic bias, lack of transparency (black-box systems), data privacy concerns, and potential errors in decision support. If not properly regulated, AI could unintentionally contribute to unfair outcomes in legal cases.

Q4. How can AI improve court efficiency?

AI can significantly reduce case backlogs by automating repetitive tasks such as sorting documents, analysing legal precedents, and scheduling cases. This allows courts to process cases faster while enabling judges to focus on critical decision-making.

Q5. Is AI bias a serious concern in judicial decisions?

Yes, AI bias is a major concern. Since AI systems learn from historical data, they can inherit existing biases present in legal records. This makes it crucial to audit AI systems regularly and ensure transparency to maintain fairness in judicial outcomes.


EDITORIAL DISCLAIMER

Informational Purpose Only: This article is produced for informational, educational, and journalistic purposes only. It does not constitute legal advice, legal opinion, or a formal assessment of any specific AI system, judicial institution, or legal framework. Readers should consult qualified legal professionals for advice pertaining to specific legal matters.

Accuracy Notice: While every effort has been made to ensure accuracy, the field of AI in the judiciary is evolving rapidly. Regulatory frameworks, case outcomes, and technological deployments described herein reflect information available as of March 2026 and may have changed subsequently.

No Endorsement: References to specific AI tools, court systems, or international organisations (including UNESCO, OECD, the European Union, and others) do not constitute endorsement of those entities or their policies.

AI Assistance Disclosure: Portions of this article’s research and drafting process were supported by AI tools. All content has been reviewed, verified, and edited by human editorial staff prior to publication.

Copyright: All content © 2026 Global Justice Report. Reproduction in whole or in part without written permission is prohibited.

ABOUT THE AUTHOR

Animesh Kullu | DailyAIWire

News Editor & AI Correspondent

Editor covering the intersection of artificial intelligence, enterprise software, and the global technology industry. Animesh holds a Certification from Journalism Now.