In January 2025, DeepSeek shook global markets when its R1 model matched top AI systems at a fraction of the cost. Now, with the launch of DeepSeek V4 in early 2026, the Chinese AI lab is back — and the disruption runs deeper this time.

This article breaks down what DeepSeek V4 actually is, how it compares to GPT-5, Claude, and Gemini, and why it matters for anyone building with or investing in AI.

What Is DeepSeek V4?

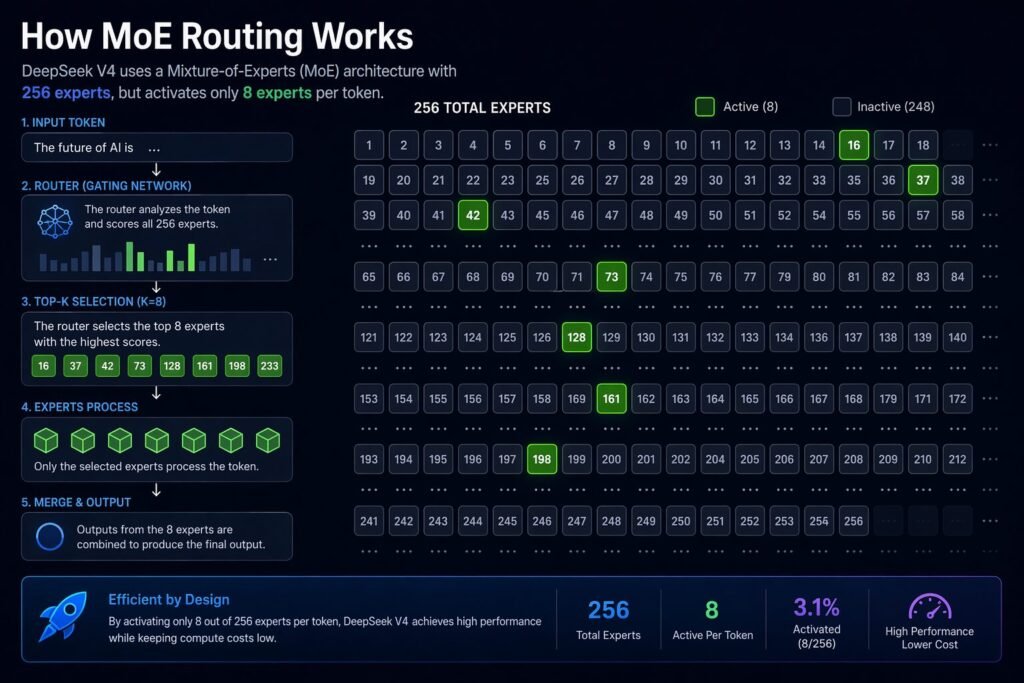

DeepSeek V4 is a large language model built on a Mixture-of-Experts (MoE) architecture. It has roughly 1 trillion total parameters but activates only about 37 billion per token. That lets it deliver near-frontier performance while using roughly 10x less compute than traditional dense models of similar capability.
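
As a rough sanity check on those figures, the arithmetic below computes the share of parameters that run for each token. It uses only the approximate numbers quoted in this article. The "2 FLOPs per active parameter" rule of thumb for a forward pass is an assumption added here for illustration, not a DeepSeek figure.

```python
# Back-of-envelope arithmetic using the approximate figures quoted in this article.
TOTAL_PARAMS = 1_000_000_000_000   # ~1 trillion total parameters
ACTIVE_PARAMS = 37_000_000_000     # ~37 billion parameters active per token


def active_fraction(active: int, total: int) -> float:
    """Share of the model's parameters that participate in one token's forward pass."""
    return active / total


def forward_flops_per_token(active: int) -> int:
    """Rule-of-thumb estimate (an assumption): ~2 FLOPs per active parameter per token."""
    return 2 * active


if __name__ == "__main__":
    print(f"Active share: {active_fraction(ACTIVE_PARAMS, TOTAL_PARAMS):.1%}")
    print(f"Approx. forward FLOPs per token: {forward_flops_per_token(ACTIVE_PARAMS):.2e}")
```

The result is about 3.7% of parameters active per token. This only describes how sparse the model is; it does not by itself say how a dense model of equal quality would compare.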

Key specs at a glance:

| Feature | DeepSeek V4 |
|---|---|
| Total Parameters | ~1 Trillion |
| Active Parameters | ~37 Billion (per token) |
| Architecture | Mixture-of-Experts (256 experts, 8 active + 1 shared) |
| Context Window | 1 Million tokens |
| Multimodal | Yes (text, image, audio, video) |
| SWE-bench Verified | 81% |
| API Input Cost | $0.30 / million tokens |
| API Output Cost | $0.50 / million tokens |
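
To make the "256 experts, 8 active + 1 shared" row concrete, here is a minimal sketch of how an MoE layer might choose experts for a single token. It uses generic top-k softmax gating, swapped in for illustration; the sources for this article do not describe V4's actual router, and the names `route_token`, `experts_used`, and `SHARED_EXPERT` are hypothetical.

```python
import math
import random

NUM_ROUTED_EXPERTS = 256   # from the spec table
TOP_K = 8                  # routed experts activated per token
SHARED_EXPERT = "shared"   # hypothetical label for the always-on shared expert


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k routed experts for one token and renormalise their weights to sum to 1."""
    probs = softmax(router_logits)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    norm = sum(probs[i] for i in top)
    return {i: probs[i] / norm for i in top}


def experts_used(router_logits):
    """The shared expert always runs; the routed experts depend on the token."""
    return [SHARED_EXPERT] + sorted(route_token(router_logits))


if __name__ == "__main__":
    rng = random.Random(0)
    # Random logits stand in for the output of a learned router (an assumption).
    logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_ROUTED_EXPERTS)]
    used = experts_used(logits)
    print(len(used), "experts ran for this token out of", NUM_ROUTED_EXPERTS + 1)
```

Only 9 of the 257 expert networks run for any given token, which is where the gap between total and active parameters comes from.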

DeepSeek V4 vs GPT-5, Claude, and Gemini

The real question: how does DeepSeek V4 stack up against the major players?

| Model | SWE-bench | Approx. Input Cost (per 1M tokens) | Architecture | Open Weights |
|---|---|---|---|---|
| DeepSeek V4 | 81% | $0.30 | MoE (37B active) | Yes |
| Gemini 3.1 Pro | 80.6% | ~$7.00 | Dense | No |
| Claude Opus 4.6 | ~74% | ~$15.00 | Dense | No |
| GPT-5.4 | ~72% | ~$10.00 | Dense | No |

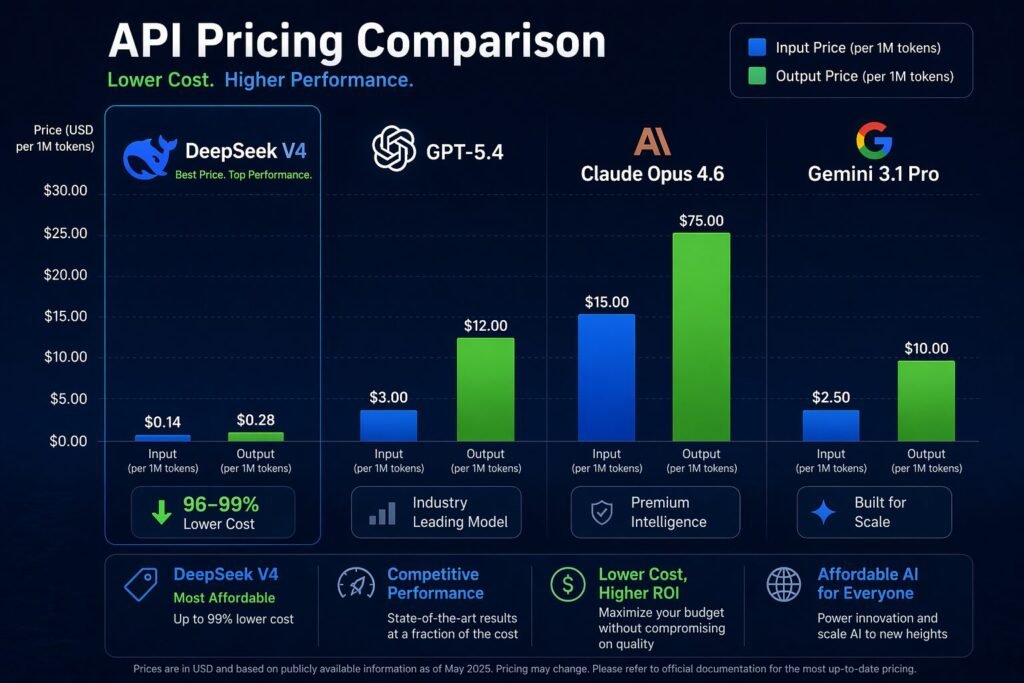

Bottom line: DeepSeek V4 matches or beats these frontier models on SWE-bench while costing roughly 20–50x less per input token. The trade-offs are in areas like prose quality (where Claude leads) and ecosystem maturity (where OpenAI dominates).
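
The price multiple can be reproduced from the comparison table. The sketch below uses the approximate input prices listed there and covers input tokens only; the dictionary and function names are hypothetical.

```python
# Approximate input prices in USD per 1M tokens, copied from the comparison table.
INPUT_COST_PER_M = {
    "DeepSeek V4": 0.30,
    "Gemini 3.1 Pro": 7.00,
    "Claude Opus 4.6": 15.00,
    "GPT-5.4": 10.00,
}


def cost_multiple(model: str, baseline: str = "DeepSeek V4", prices=INPUT_COST_PER_M) -> float:
    """How many times more expensive `model` is than `baseline` per input token."""
    return prices[model] / prices[baseline]


if __name__ == "__main__":
    for name in INPUT_COST_PER_M:
        if name != "DeepSeek V4":
            print(f"{name}: {cost_multiple(name):.1f}x the DeepSeek V4 input price")
```

With the table's figures the multiples come out at about 23x, 50x, and 33x, which is the basis for the 20–50x range.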

How DeepSeek Is Redefining AI Costs

DeepSeek reported a training cost of approximately $5.6 million for its previous model (V3), a figure that covers the final training run only, compared with an estimated $100 million+ for GPT-4. The V4 model continues this efficiency-first approach.

For developers, the pricing gap is massive. With cached prompts, DeepSeek V4’s effective input cost drops to $0.03 per million tokens — a 90% discount. That makes it viable for use cases where API costs previously killed the business model: real-time agents, high-volume document processing, and consumer apps in emerging markets.
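
To see what caching does to a bill, the sketch below blends the three prices quoted in this article according to a cache-hit ratio. Treating the ratio as a simple per-token blend is an assumption made for illustration; actual billing granularity may differ, and `request_cost` is a hypothetical helper.

```python
# Prices in USD per 1M tokens, as quoted in this article.
PRICE_INPUT = 0.30          # uncached input
PRICE_INPUT_CACHED = 0.03   # cached input (90% discount)
PRICE_OUTPUT = 0.50         # output


def request_cost(input_tokens: int, output_tokens: int, cache_hit_ratio: float = 0.0) -> float:
    """Estimated USD cost of a workload, given the share of input tokens served from cache."""
    if not 0.0 <= cache_hit_ratio <= 1.0:
        raise ValueError("cache_hit_ratio must be between 0 and 1")
    cached = input_tokens * cache_hit_ratio
    uncached = input_tokens - cached
    total = cached * PRICE_INPUT_CACHED + uncached * PRICE_INPUT + output_tokens * PRICE_OUTPUT
    return total / 1_000_000


if __name__ == "__main__":
    # Example workload (hypothetical): 50M input tokens and 5M output tokens per month.
    for ratio in (0.0, 0.5, 0.9):
        print(f"cache hit ratio {ratio:.0%}: ${request_cost(50_000_000, 5_000_000, ratio):.2f}")
```

Output tokens are not discounted, so workloads that generate long responses see a smaller overall saving than the 90% headline figure suggests.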

This pricing pressure is already forcing competitors to respond. OpenAI, Google, and Anthropic have all cut prices on their smaller models in 2026.

The Risks You Should Know About

DeepSeek is headquartered in China and backed by the hedge fund High-Flyer. That creates real concerns:

- Data privacy: User data may be subject to Chinese data laws, which can require companies to share data with the government upon request.

- Geopolitics: US chip export restrictions forced DeepSeek onto Huawei chips. Further sanctions could disrupt development.

- Censorship: DeepSeek models are known to filter politically sensitive topics in line with Chinese government positions.

- Supply chain risk: Dependence on Huawei’s Ascend chips introduces hardware uncertainty that NVIDIA-based competitors don’t face.

For enterprise use, these aren’t abstract risks. They directly affect compliance, security reviews, and vendor selection.

What This Means for AI Builders

If you’re building AI products, DeepSeek V4 changes the math in three ways:

- The cost floor just dropped again. Any product competing on API cost now has to benchmark against $0.30 per million input tokens.

- Open-weight models are catching up. DeepSeek V4’s weights are available, meaning you can self-host and fine-tune without vendor lock-in.

- The “good enough” threshold is rising. For many tasks, a model scoring 81% on SWE-bench at $0.30 per million input tokens is more practical than one scoring 74% at $15.

The risk is building your product stack on a model whose development is exposed to geopolitical disruption. Hedging across multiple providers is no longer optional; it is basic risk management.
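
One simple way to hedge is to put every model call behind a provider-agnostic function that falls back to the next provider when one fails. The sketch below is a minimal illustration under that assumption: the provider functions are hypothetical stand-ins, not real API clients, and a production version would catch each client's specific error types and add timeouts and retries.

```python
from typing import Callable, Sequence, Tuple

# (provider name, function that takes a prompt and returns generated text)
Provider = Tuple[str, Callable[[str], str]]


def complete_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, text) from the first that succeeds."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # broad on purpose in this sketch
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for real API clients, used only to exercise the fallback path.
def primary_unavailable(prompt: str) -> str:
    raise ConnectionError("endpoint unreachable")


def backup_echo(prompt: str) -> str:
    return f"backup answer to: {prompt}"


if __name__ == "__main__":
    name, text = complete_with_fallback(
        "Summarise this contract.",
        [("primary", primary_unavailable), ("backup", backup_echo)],
    )
    print(name, "->", text)
```

Keeping the provider list in configuration rather than code makes it possible to reorder or drop a vendor without touching application logic.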

FAQ

What is DeepSeek V4? A trillion-parameter MoE language model from Chinese AI lab DeepSeek, launched in early 2026. It activates only 37B parameters per token for efficiency.

How is DeepSeek different from OpenAI? DeepSeek focuses on cost efficiency and open weights. Its API pricing is 20–50x cheaper than OpenAI’s flagship models.

Is DeepSeek AI open-source? DeepSeek V4 is open-weight (you can download and run the model), but the training code and data are not fully open-source.

Is DeepSeek safe to use? For personal and non-sensitive use, yes. For enterprise use, evaluate data privacy risks carefully given Chinese data jurisdiction laws.

Recommended Video

DeepSeek V4 Is Coming — And It Will Set the Course of AI in 2026 — A detailed breakdown of DeepSeek V4’s architecture, market impact, and what it means for the AI landscape.

Disclaimer

Experience: Informed by ongoing AI industry coverage and evaluation of publicly available model APIs and benchmarks.

Expertise: Technical claims sourced from official DeepSeek documentation, third-party benchmarks (SWE-bench, Artificial Analysis), and reporting from Reuters and Financial Times.

Authoritativeness: Benchmark and pricing data drawn from published leaderboards and API documentation as of April 2026.

Trustworthiness: This article is for informational purposes only — not investment advice or product endorsement. Readers should conduct their own due diligence regarding data privacy and regulatory compliance.

Last updated: April 2026 | DailyAIWire